Most businesses handle a tremendous amount of data every day. One of the main challenges during the life cycle of an ERP system is its ability to offer agile, prebuilt, modern data integration patterns for complex business scenarios, so that it can communicate easily with other systems and ensure continuous data integration and data storage flows.

In Dynamics 365 FinOps, we have multiple options for integrating data between FinOps apps and third-party services, and from there with any other system.

Here is the official Microsoft list of available integration patterns.

Today’s scenario: Data package export through Power Automate and the Data management REST API

Today’s architecture exports a D365FinOps data package to Azure Blob storage by using Power Automate.

In this blog, we will use the following elements:

- Dynamics 365 For Finance and Operations

  - A data export project created in D365FinOps

- Power Automate

  - An automated cloud flow that continuously pushes exported data packages from D365FinOps to the blob storage account

- Azure Blob storage account

  - The final destination of the exported packages

Before creating the Dynamics 365 FinOps data export project, it is worth remembering that there are two different options for the data batch API pattern. Let’s analyze each one.

Choosing the right integration API based on your business scenario

The Data management framework’s package API is used to integrate the Dynamics 365 FinOps application with other applications by using data packages. It is not the only file-based option, though, so which API should I use?

Data management API vs. Recurring integrations API

Two APIs support file-based integration scenarios: the Data management framework’s package API and the recurring integrations API. Both APIs support data import and data export scenarios.

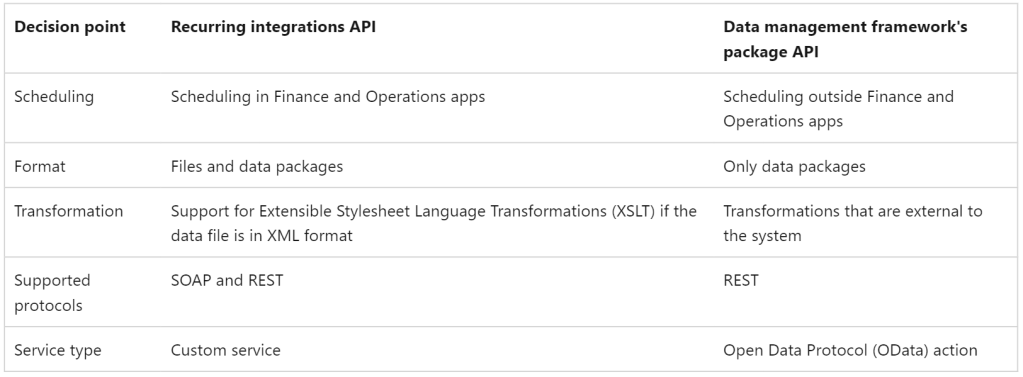

The following table describes the main decision points that you should consider when you’re trying to decide which API to use.

Note: In this blog, we will use the Data management package REST API.

Data export project in Dynamics 365 FinOps

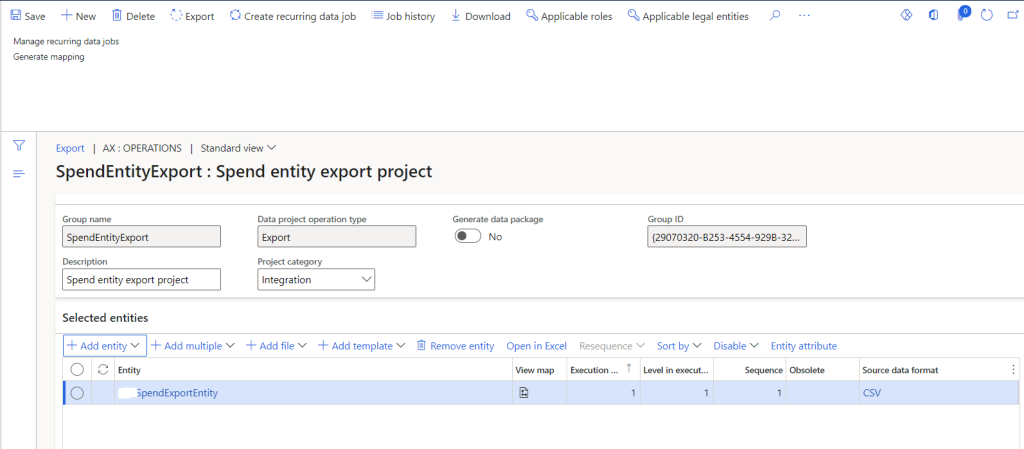

The first step in this scenario is to create the data export project. Here, I’m exporting the custom Spend data entity in CSV format.

Power Automate flow to push the exported data package to Azure Blob storage

Once the data export project is created and saved, it is time for Power Automate to take over with the automated actions.

The goal here is to automatically call the data export project through the export APIs, from D365FinOps (source) to Azure Blob storage (target).

Now let’s create the flow actions step by step.

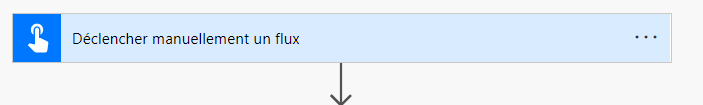

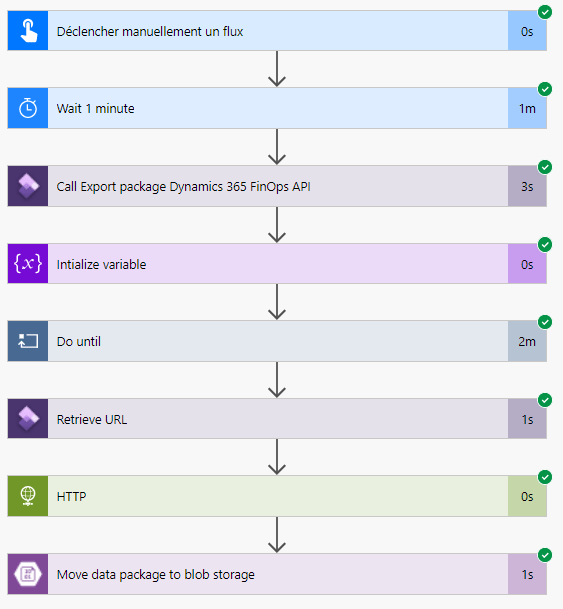

Manually trigger the flow

In this flow, we use a manual trigger to start the process. We could also use a recurring data export project in D365FinOps to schedule a continuous data export.

Note: One of the major decision points when choosing between the recurring integrations API and the Data management API is scheduling. The first option lets you schedule your data export directly inside D365 FinOps, while the second lets you schedule it outside the D365FinOps app, which can be very useful when your export depends on other business scenarios.

You can start your Power Automate flow with other triggers as well, such as Power Apps.

Wait 1 minute action

Once the manual trigger is configured, the second step delays the flow by 1 minute before moving on to the next steps.

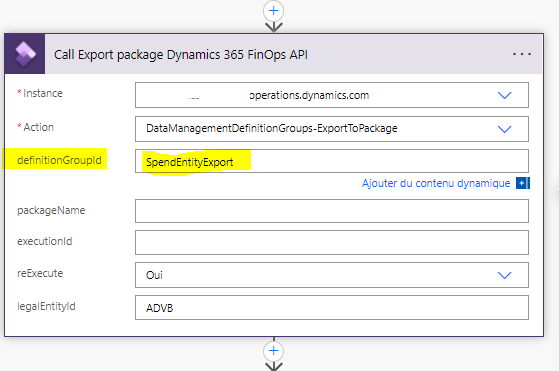

Call D365 FinOps export project via Data management API action

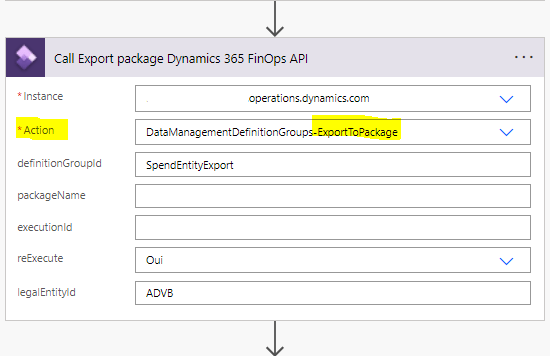

In this step we are calling the ExportToPackage DMF REST API to schedule the export of the data package.

- Action: the list of prebuilt DMF APIs. The DataManagementDefinitionGroups-ExportToPackage API is used to initiate the export of our data package.

- DefinitionGroupId: the name of the data export project.

- Because this step refers to a data export project that already exists in our instance, the project must always be created before the API is called.

- If change tracking has been turned on, only the delta is returned (only newly created or updated records are considered).

- The output of this step is ExecutionId, a string that identifies the execution of the data export. It is also called the Job ID in the user interface.
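For readers who want to see what the connector action sends, here is a minimal sketch of the equivalent raw HTTP request in Python. It only builds the request and does not send it. The base URL, the bearer token, the package name, and the legal entity are placeholder assumptions, and the function name `build_export_request` is made up for this illustration.

```python
import json
import urllib.request

# OData action path of the Data management package REST API.
EXPORT_ACTION = (
    "/data/DataManagementDefinitionGroups/"
    "Microsoft.Dynamics.DataEntities.ExportToPackage"
)


def build_export_request(base_url, token, definition_group_id,
                         package_name, legal_entity_id):
    """Build (but do not send) the ExportToPackage POST request."""
    body = {
        "definitionGroupId": definition_group_id,  # data export project name
        "packageName": package_name,               # name of the exported package
        "executionId": "",                         # empty: a new execution ID is created
        "reExecute": False,
        "legalEntityId": legal_entity_id,
    }
    return urllib.request.Request(
        url=base_url.rstrip("/") + EXPORT_ACTION,
        data=json.dumps(body).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {token}",  # token acquisition is out of scope here
            "Content-Type": "application/json",
        },
        method="POST",
    )
```

Sending the request with `urllib.request.urlopen(request)` should return a JSON body whose `value` field holds the execution ID, assuming the usual OData response shape.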

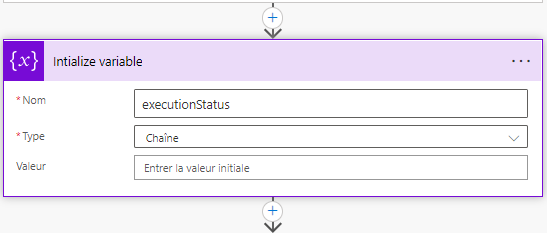

Initialize executionStatus variable action

The previous action called the export package API and returned the execution ID of the export job.

In this step, we declare the executionStatus string variable, which will store the execution status of the job started by the ExportToPackage step.

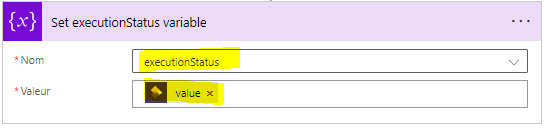

Do until executionStatus = Succeeded action

The goal of this step is to check the status of the data project execution.

- The Do until action keeps checking the executionStatus variable until its value is Succeeded.

- Inside the Do until action, we call the GetExecutionSummaryStatus API to check the data export status.

- The execution status returned in each iteration is stored by the Set executionStatus variable action.

GetExecutionSummaryStatus

This API is used for both import jobs and export jobs to check the status of a data project execution job.

- The output of this API call is an execution status.

- Here are the possible values:

  - Unknown

  - NotRun

  - Executing

  - Succeeded

  - PartiallySucceeded

  - Failed

  - Canceled
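The polling logic of the Do until loop can be sketched in Python as follows. This is an illustration under assumptions, not the flow itself: `get_status` is a hypothetical callable that wraps the GetExecutionSummaryStatus call and returns the status string, and the interval and attempt limit are arbitrary. Unlike the flow above, which only exits on Succeeded, this sketch also stops on the other final statuses so that a failed job does not keep the loop running.

```python
import time

# Statuses after which the job is not expected to change any more.
TERMINAL_STATUSES = {"Succeeded", "PartiallySucceeded", "Failed", "Canceled"}


def wait_for_export(get_status, interval_seconds=30, max_attempts=60,
                    sleep=time.sleep):
    """Poll get_status() until it returns a terminal status.

    get_status is a hypothetical callable wrapping GetExecutionSummaryStatus.
    Raises TimeoutError if no terminal status is seen within max_attempts.
    """
    for attempt in range(max_attempts):
        status = get_status()
        if status in TERMINAL_STATUSES:
            return status
        if attempt < max_attempts - 1:
            sleep(interval_seconds)  # wait before the next check
    raise TimeoutError(f"export not finished after {max_attempts} checks")
```

The caller can then continue to the next step only when the returned status is Succeeded and handle Failed or Canceled explicitly.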

Get the exported package URL action

Once the package has been exported successfully (executionStatus = Succeeded), the flow continues to the next step.

The goal here is to retrieve the package URL, which is then used to push the package to the Azure Blob storage account.
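As a rough equivalent outside Power Automate, the sketch below builds the GetExportedPackageUrl request and reads the URL from the response. The base URL and token are placeholders, both function names are made up for this example, and the `value` field is an assumption based on the usual OData response shape.

```python
import json
import urllib.request

PACKAGE_URL_ACTION = (
    "/data/DataManagementDefinitionGroups/"
    "Microsoft.Dynamics.DataEntities.GetExportedPackageUrl"
)


def build_package_url_request(base_url, token, execution_id):
    """Build (but do not send) the GetExportedPackageUrl POST request."""
    return urllib.request.Request(
        url=base_url.rstrip("/") + PACKAGE_URL_ACTION,
        data=json.dumps({"executionId": execution_id}).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {token}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


def extract_package_url(response_body):
    """Return the package URL from the JSON response body.

    Assumes the URL is returned in the "value" field of the response.
    """
    url = json.loads(response_body).get("value") or ""
    if not url.startswith("https://"):
        raise ValueError("no package URL in response; has the export finished?")
    return url
```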

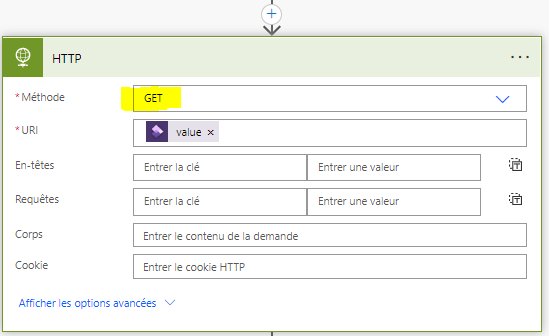

Download the exported package via HTTP action

In this step we are going to add an HTTP GET request to download the package from the URL that was returned in the previous step.
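The same download can be sketched in Python with the standard library. The function name `download_package` and the zip check are additions for illustration; the flow itself simply passes the response body on to the next action.

```python
import shutil
import urllib.request
import zipfile
from pathlib import Path


def download_package(package_url, destination):
    """Stream the package at package_url to destination and return its path."""
    destination = Path(destination)
    # Copy the response in chunks so a large package is never held fully in memory.
    with urllib.request.urlopen(package_url) as response, \
            open(destination, "wb") as out_file:
        shutil.copyfileobj(response, out_file)
    # A data package is a zip archive, so reject anything else early.
    if not zipfile.is_zipfile(destination):
        raise ValueError(f"{destination} is not a valid zip package")
    return destination
```

The zip check is optional, but it catches the case where the URL returns an error page instead of the package.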

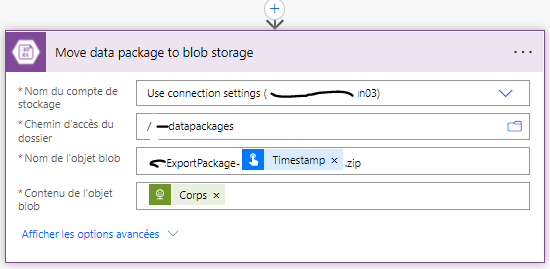

Move the data package to Azure blob storage action

In this step we are moving the downloaded package into the blob storage.
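Outside Power Automate, one way to reproduce this step is the Blob service Put Blob REST operation. The sketch below only builds the request. It assumes you have a container SAS URL with write permission; the function name, the blob name, and the sample URL in the test are placeholders, not values from this scenario.

```python
import urllib.request
from urllib.parse import quote, urlsplit, urlunsplit


def build_put_blob_request(container_sas_url, blob_name, data):
    """Build (but do not send) a Put Blob request for a block blob.

    container_sas_url is assumed to look like
    https://<account>.blob.core.windows.net/<container>?<sas-token>.
    """
    parts = urlsplit(container_sas_url)
    # Insert the blob name between the container path and the SAS query string.
    blob_path = parts.path.rstrip("/") + "/" + quote(blob_name)
    blob_url = urlunsplit(parts._replace(path=blob_path))
    return urllib.request.Request(
        url=blob_url,
        data=data,
        headers={
            "x-ms-blob-type": "BlockBlob",  # required header for Put Blob
            "Content-Type": "application/zip",
        },
        method="PUT",
    )
```

Sending the request with `urllib.request.urlopen(request)` uploads the bytes in a single call, which should be fine for small packages; very large packages would need a chunked upload instead.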

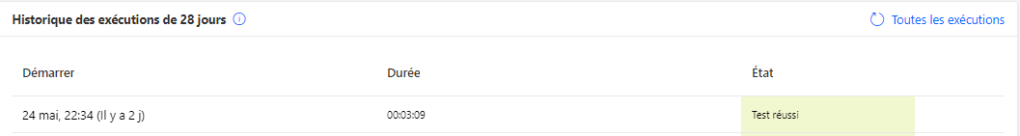

Test the flow

To test your flow, select the Test button in the designer. You will see the steps of your Power Automate flow start to run. Here, the run completed after 3 minutes and 9 seconds.

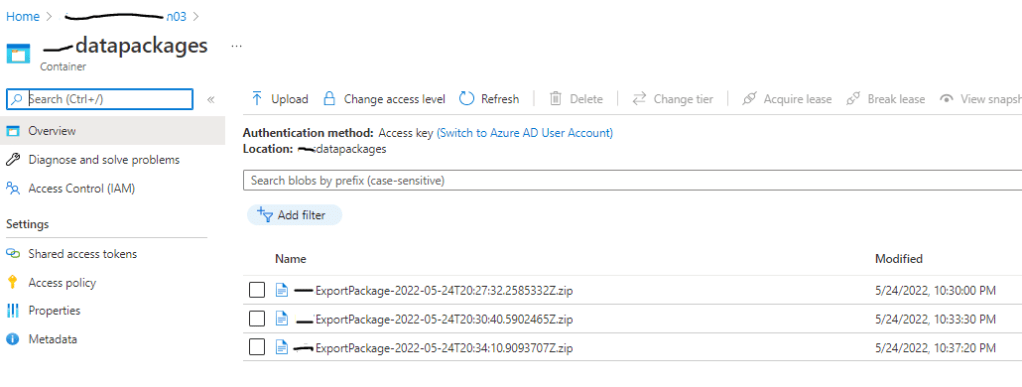

The flow finished running, and my data package was added to the predefined blob storage folder.

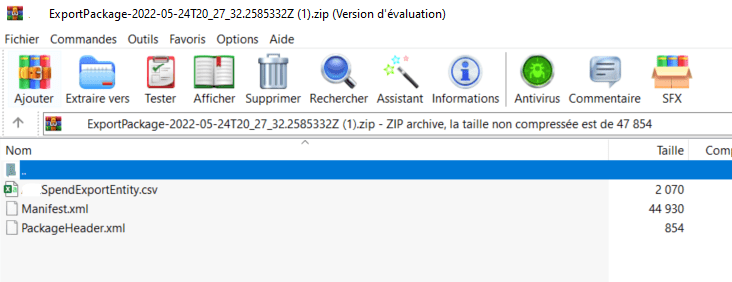

The exported ZIP package contains the CSV file of my custom Spend data entity from D365FinOps.
